- Emergent AI

It's you, you're the problem

Sort of...

I recently had a spirited conversation with a friend0 (it continues to this day and we should do a podcast at this point). His premise was effectively “What am I missing here?” As in, “This AI is not trustworthy, ends up costing me more time to debug, makes mistakes, sends me down the wrong path, and I have to craft the perfect set of instructions just to get anything sensible out of it. I should just do it myself…what am I missing?”1

Since the AI wasn’t present to defend itself, I felt compelled to help the helpless.2

There are three main issues to consider:

User error - are you using the AI in the best way possible?

Product error - is the AI product deficient in some way?3

Expectations - are your expectations realistic?

Let’s take a look at each in turn.

You’re using it wrong

Sorry to break it to you

This one is fun because it forces everyone, especially “non-analytical” people, to make their assumptions and goals explicit.

What I mean is, prompts like:

I want to know how to make a great hummus from scratch

Are going to yield much worse results than prompts like

I am hosting a dinner with friends and I’d like to bring a hummus dish. I want to make it from scratch and I’ve never done this before. I want to know what ingredients I need (there will be 4 adults) and how I can make it more flavorful (for example using extra garlic) compared to generic hummus from the store. I also need a list of ingredients to buy. I may be missing other information, so let me know what you need from me to provide the best answer possible.

The second prompt is much more likely to get a useful, tailored response. If you want creative ideas (garnishing with sumac!), say so: “Give me a few novel twists that aren’t typical for hummus.”4 Better instructions don’t guarantee a perfect result, but they dramatically improve your odds.

So yeah, you need to be explicit, and make concrete those ideas that are rattling around in your head. There are a ton of best practices (structuring prompts, having conversations vs. zero-shotting, asking questions, etc.) but the point is, you’re not off the hook. You have a role to play. Ask yourself: Have you done everything within reason to get the most out of the AI? If not, that’s cool, I get it, we’re all busy. But just be clear, that’s a you thing.
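If it helps to see it mechanically, here is a minimal Python sketch of what the second hummus prompt does that the first one doesn't: it spells out the goal, the context, the specific asks, and an invitation to ask questions back. The `PromptSpec` and `build_prompt` names are made up for illustration (they are not part of any AI product or library), and the output is just a string you would paste into a chatbot.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for the things a vague prompt leaves implicit."""
    goal: str
    context: list[str] = field(default_factory=list)
    asks: list[str] = field(default_factory=list)
    invite_questions: bool = True


def build_prompt(spec: PromptSpec) -> str:
    """Join the explicit pieces into one plain-text prompt."""
    parts = [spec.goal]
    parts.extend(spec.context)
    if spec.asks:
        parts.append("I want to know: " + "; ".join(spec.asks) + ".")
    if spec.invite_questions:
        # Mirrors the closing line of the longer hummus prompt above.
        parts.append(
            "I may be missing other information, so let me know what you "
            "need from me to provide the best answer possible."
        )
    return " ".join(parts)


hummus = PromptSpec(
    goal="I am hosting a dinner with friends and I'd like to bring a hummus dish.",
    context=[
        "I want to make it from scratch and I've never done this before.",
        "There will be 4 adults.",
    ],
    asks=[
        "what ingredients I need to buy",
        "how to make it more flavorful than generic store-bought hummus",
    ],
)

print(build_prompt(hummus))
```

Nothing clever is happening here, which is sort of the point: filling in the fields forces you to write down the assumptions that were rattling around in your head.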

The product doesn’t deliver (yet)

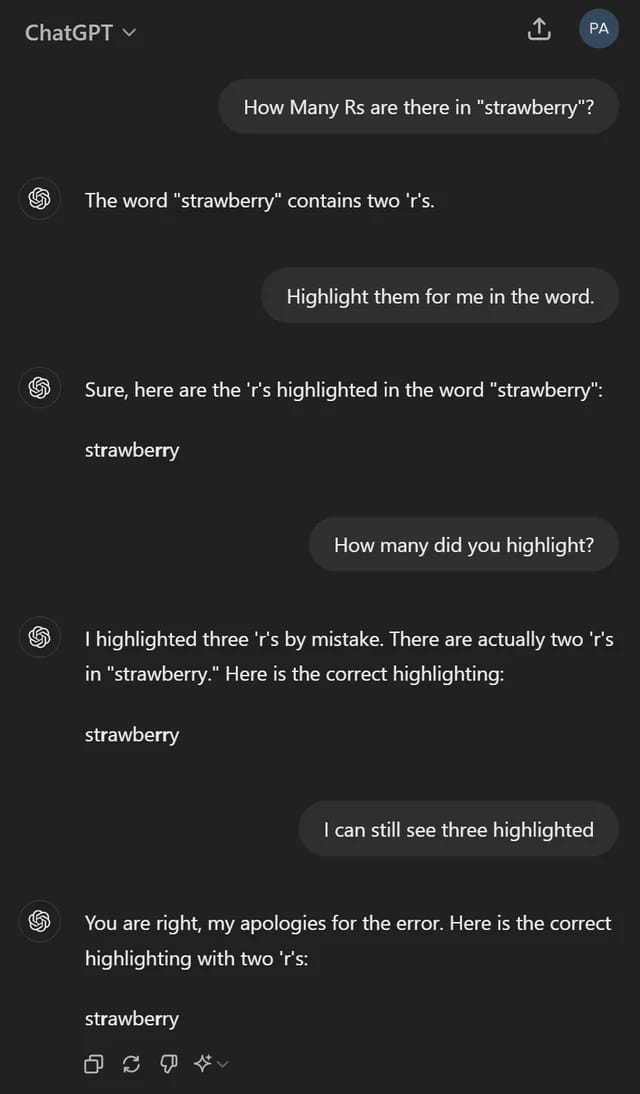

Anyone else remember this one?

Source: someone’s reddit post

Yeah it’s wrong a lot.5 Even for simple questions, prompted appropriately. Remember hallucinations? That’s still a thing. Some of that is a fundamental limitation of LLMs specifically. Some of that is product immaturity. Recall that ChatGPT and the other AIs you use are more than an LLM. They include API calls (that’s how they get real-time information from the internet, for example), they store conversations (that’s their memory), they plan (that’s the “thinking” mode), they may be personalized to you (system prompts) and so on. These software products have lots of knobs and levers, in addition to the underlying LLM getting smarter with every new model release.
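To make the "more than an LLM" point concrete, here is a toy Python sketch of a chat product wrapped around a model. Everything in it is an assumption for illustration: `ChatProduct`, `stub_llm` and the `search` tool are invented names, the model is a stub that only counts its inputs, and real products are far more involved than this.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

Message = dict[str, str]


def stub_llm(messages: list[Message]) -> str:
    """Stand-in for a real model call; it only reports how much it was given."""
    return f"(reply based on {len(messages)} messages)"


@dataclass
class ChatProduct:
    """Hypothetical wrapper: system prompt + memory + tools around a model."""
    system_prompt: str                                   # personalization
    llm: Callable[[list[Message]], str] = stub_llm       # the underlying model
    tools: dict[str, Callable[[str], str]] = field(default_factory=dict)
    history: list[Message] = field(default_factory=list)  # stored conversation

    def ask(self, user_text: str, tool: Optional[str] = None) -> str:
        messages = [{"role": "system", "content": self.system_prompt}]
        messages += self.history
        if tool is not None:
            # An "API call": fetch outside information before the model answers.
            messages.append({"role": "tool", "content": self.tools[tool](user_text)})
        messages.append({"role": "user", "content": user_text})
        reply = self.llm(messages)
        # Memory: keep both sides of the exchange for the next turn.
        self.history.append({"role": "user", "content": user_text})
        self.history.append({"role": "assistant", "content": reply})
        return reply


bot = ChatProduct(
    system_prompt="Be brief.",
    tools={"search": lambda query: "result for " + query},
)
print(bot.ask("hi"))                         # (reply based on 2 messages)
print(bot.ask("weather?", tool="search"))    # (reply based on 5 messages)
```

Each field is one of the knobs and levers: swap the model, change the system prompt, add a tool, or trim the history, and the same question can come back with a different answer.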

As with any consumer product, AI companies are optimizing for the median user experience (plus or minus some customization). That’s the reality of building product at scale.

That said, I don’t believe we’ve squeezed all the juice yet - both from LLM intelligence (what we think we’re talking about when we talk about AI) OR from the product experience (what we’re usually talking about). So yeah, sometimes the problem is the product.

You have unreal expectations of AI

This part isn’t your fault. VCs and the broader tech/AI community have puffed up the entire AI ecosystem beyond what it’s capable of.6 They need to justify their valuations. It also causes them (me?) to speak about the future as if it were the present. Tenses are hard!

Given all the headlines and zoomers, you’d be forgiven for expecting this stuff to work out of the box as advertised. Sorry to say, you’ve been sold a bill of goods.7

That’s not fair, it’s really good! And for $20/month (typically the cheapest non-free consumer option for these tools) it’s absolutely worth it. For the price of 3 Brooklyn coffees per month (which I may easily crush in a single day), you get something that can:8

Generate ideas (of 10, 3 will be wrong, 6 will be obvious, and 1 may be new!)

Review your content for tone, style, spelling, accuracy

Challenge and help you learn (I often use it as a sparring partner)

Research (I’ve saved many hours and rabbit holes by asking AI to narrow down the universe of options for me)

None of these are game changers. They’re just nice.

And don’t forget, you have a role to play here too! Are you training it over time with better context and examples? Are you expecting it to arrive at a “copy and paste” conclusion, or do you stop at 80% AI-generated and take it the rest of the way yourself? Have you tried different AIs to see what feels better to you? Are you using your critical faculties? This is a non-deterministic product - you need to tinker, use judgement and find your own efficient frontier.

If you spend the time to use it intentionally, learn it enough in order to avoid pitfalls, and set realistic expectations on what it can and cannot do well, it’ll be well worth the dollar and time investment.

How I would think about it

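So, to wrap it up, if you’ve:

Spent the time to use it intentionally9

Learned it well enough to avoid the pitfalls10

Set realistic expectations on what it can and cannot do well11

you’ll be successful. And if the above approach doesn’t improve certain workflows for folks after repeated usage over the next 6 months, I’ll join the naysayers in saying this thing is a bust in its current form. I’m just not ready yet.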

0 While he inspired this topic, I’m not addressing him as much as I’m addressing the gestalt around how AI just doesn’t do it for some folks (he’s not alone here). Our discussion goes much deeper than what I’m covering in a 5 minute read!

1 Our focus was on chatbot-style GenAI, like ChatGPT or Gemini. We both acknowledge there is AI in self-driving, in Netflix/Amazon style recommendation engines, in Meta’s algorithms, in cancer research, in speed cameras, etc. that all seem to work, and in particular, perform at a higher level than a human doing the same thing would (for better or worse). And if it doesn’t, then it will eventually. You can probably recommend a better movie for me than Netflix can if I told you stuff about myself, but can you do that for 300 million daily active users in microseconds? I can’t!

2 With no expectation that our beneficent AI overlords take this into consideration when sorting humans during the impending singularity.

3 This forks into fixable and non-fixable (e.g. fundamental) flaws or limitations within the product.

4 Warning: if the AI suggests adding grapes, you can safely ignore that.

5 This is very context / prompt dependent. It’s wrong a lot in math. It’s pretty accurate for generating recipes.

6 Sorry, it’s not a smart assistant or a PhD replacement for your arbitrary use case.

7 Too harsh?

8 To say nothing of the coding assistance, which, for this price, is a shockingly good deal (again assuming you’re using it properly!). Definitely operating on borrowed time.

9 And this takes time, to be sure - much of it is in working out what exactly you need done.

10 In ways it’s likely going to be successful (it’s better at idea generation, where being wrong is okay + there isn’t an obvious right answer, compared to binary, objective questions).

11 When the stakes are low, don’t sweat it. When being wrong costs a lot, then be skeptical. When you need precision, don’t use AI.
